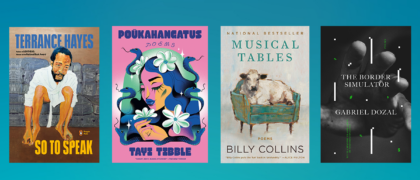

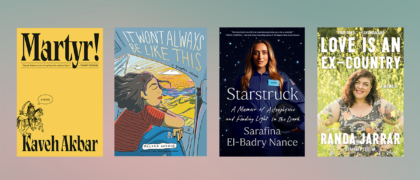

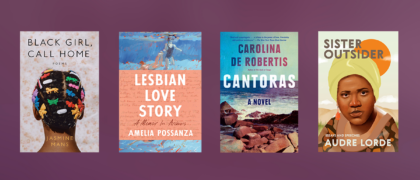

Books for National Poetry Month

For National Poetry Month in April, we are sharing poetry collections and books about poetry by authors who have their own stories to tell. These poets delve into history, reimagine the present, examine poetry itself, and offer insight into grief and reflection, from traditional poems many know and love to poems and voices that are new and
